Auto-Tune — one of modern history’s most reviled inventions — was an act of mathematical genius.

The pitch correction software, which automatically calibrates out-of-tune singing to perfection, has been used on nearly every chart-topping album for the past 20 years. Along the way, it has been pilloried as the poster child of modern music’s mechanization. When Time Magazine declared it “one of the 50 worst inventions of the 20th century”, few came to its defense.

But often lost in this narrative is the story of the invention itself, and the soft-spoken savant who pioneered it. For inventor Andy Hildebrand, Auto-Tune was an incredibly complex product — the result of years of rigorous study, statistical computation, and the creation of algorithms previously deemed to be impossible.

Hildebrand’s invention has taken him on a crazy journey: He’s given up a lucrative career in oil. He’s changed the economics of the recording industry. He’s been sued by hip-hop artist T-Pain. And in the course of it all, he’s raised pertinent questions about what constitutes “real” music.

The Oil Engineer

Andy Hildebrand was, in his own words, “not a normal kid.”

A self-proclaimed bookworm, he was constantly derailed by life’s grand mysteries, and had trouble sitting still for prolonged periods of time. School was never an interest: when teachers grew weary of slapping him on the wrist with a ruler, they’d stick him in the back of the class, where he wouldn’t bother anybody. “That way,” he says, “I could just stare out of the window.”

After failing the first grade, Hildebrand’s academic performance slowly began to improve. Toward the end of grade school, the young delinquent started pulling C’s; in junior high, he made his first B; as a high school senior, he was scraping together occasional A’s. Driven by a newfound passion for science, Hildebrand “decided to start working [his] ass off” -- an endeavor that culminated in an electrical engineering PhD from the University of Illinois in 1976.

In the course of his graduate studies, Hildebrand excelled in his applications of linear estimation theory and signal processing. Upon graduating, he was plucked up by oil conglomerate Exxon, and tasked with using seismic data to pinpoint drill locations. He clarifies what this entailed:

“I was working in an area of geophysics where you emit sounds on the surface of the Earth (or in the ocean), listen to reverberations that come up, and, from that information, try to figure out what the shape of the subsurface is. It’s kind of like listening to a lightning bolt and trying to figure out what the shape of the clouds are. It’s a complex problem.”

Three years into Hildebrand’s work, Exxon ran into a major dilemma: the company was nearing the end of its seven-year construction timeline on an Alaskan pipeline; if they failed to get oil into the line in time, they’d lose their half-billion dollar tax write-off. Hildebrand was enlisted to fix the holdup — faulty seismic monitoring instrumentation — a task that required “a lot of high-end mathematics.” He succeeded.

“I realized that if I could save Exxon $500 million,” he recalls, “I could probably do something for myself and do pretty well.”

A subsurface map of one geologic stratum, color coded by elevation, created on the Landmark Graphics workstation (the white lines represent oil fields); courtesy of Andy Hildebrand

So, in 1979, Hildebrand left Exxon, secured financing from a few prominent venture capitalists (DLJ Financial; Sevin Rosen), and, with a small team of partners, founded Landmark Graphics.

At the time, the geophysical industry had limited data to work off of. The techniques engineers used to map the Earth’s subsurface resulted in two-dimensional maps that typically provided only one seismic line. With Hildebrand as its CTO, Landmark pioneered a workstation — an integrated software/hardware system — that could process and interpret thousands of lines of data, and create 3D seismic maps.

Landmark was a huge success. Before retiring in 1989, Hildebrand took the company through an IPO and a listing on NASDAQ; six years later, it was bought out by Halliburton for a reported $525 million.

“I retired wealthy forever (not really, my ex-wife later took care of that),” jokes Hildebrand. “And I decided to get back into music.”

From Oil to Music Software

An engineer by trade, Hildebrand had always been a musician at heart.

As a child, he was something of a classical flute virtuoso and, by 16, he was a “card-carrying studio musician” who played professionally. His undergraduate engineering degree had been funded by music scholarships and teaching flute lessons. Naturally, after leaving Landmark and the oil industry, Hildebrand decided to return to school to study composition more intensively.

While pursuing his studies at Rice University’s Shepherd School of Music, Hildebrand began composing with sampling synthesizers (machines that allow a musician to record notes from an instrument, then make them into digital samples that could be transposed on a keyboard). But he encountered a problem: when he attempted to make his own flute samples, he found the quality of the sounds to be ugly and unnatural.

“The sampling synthesizers sounded like shit: if you sustained a note, it would just repeat forever,” he gripes. “And the problem was that the machines didn’t hold much data.”

Hildebrand, who’d “retired” just a few months earlier, decided to take matters into his own hands. First, he created a processing algorithm that greatly condensed the audio data, allowing for a smoother, more natural-sounding sustain and timbre. Then, he packaged this algorithm into a piece of software (called Infinity), and handed it out to composers.

A glimpse at Infinity's interface from an old handbook; courtesy of Andy Hildebrand

Infinity improved digitized orchestral sounds so dramatically that it uprooted Hollywood’s music production landscape: using the software, lone composers were able to accurately recreate film scores, and directors no longer had a need to hire entire orchestras.

“I bankrupted the Los Angeles Philharmonic,” Hildebrand chuckles. “They were out of the [sample recording] business for eight years.” (We were unable to verify this, but The Los Angeles Times does cite that the Philharmonic entered a 'financially bleak' period in the early 1990s).

Unfortunately, Hildebrand’s software was inherently self-defeating: companies sprouted up that processed sounds through Infinity, then sold them as pre-packaged soundbanks. “I sold 5 more copies, and that was it,” he says. “The market totally collapsed.”

But the inventor’s bug had taken hold of Hildebrand once more. In 1990, he formed his final company, Antares Audio Technologies, with the goal of creating the music industry’s next big piece of software. And that’s exactly what happened.

The Birth of Auto-Tune

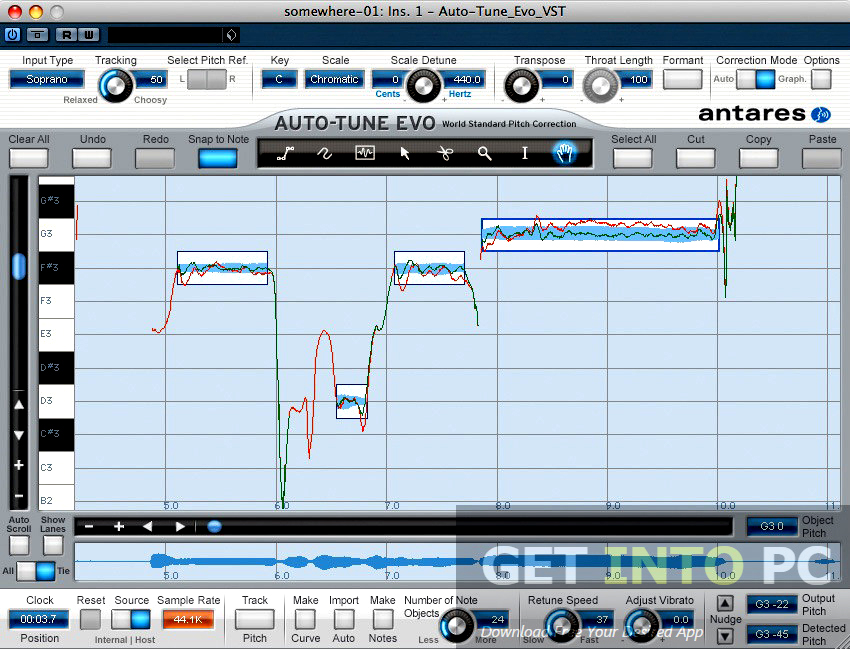

A rendering of the Auto-Tune interface; via WikiHow

At a National Association of Music Merchants (NAMM) conference in 1995, Hildebrand sat down for lunch with a few friends and their wives. Randomly, he posed a rhetorical question — “What needs to be invented?” — and one of the women half-jokingly offered a response:

“Why don’t you make a box that will let me sing in tune?”

“I looked around the table and everyone was just kind of looking down at their lunch plates,” recalls Hildebrand, “so I thought, ‘Geez, that must be a lousy idea’, and we changed the topic.”

Hildebrand completely forgot he’d even had this conversation, and for the next six months, he worked on various other projects, none of which really took off. Then, one day, while mulling over ideas, the woman’s suggestion came back to him. “It just kind of clicked in my head,” he says, “and I realized her idea might not be too bad.”

What “clicked” for Hildebrand was that he could use some of the very same processing methods he’d used in the oil industry to build a pitch correction tool. Years later, he’d attempt to explain this on PBS’s NOVA:

'Seismic data processing involves the manipulation of acoustic data in relation to a linear time varying, unknown system (the Earth model) for the purpose of determining and clarifying the influences involved to enhance geologic interpretation. Coincident (similar) technologies include correlation (statics determination), linear predictive coding (deconvolution), synthesis (forward modeling), formant analysis (spectral enhancement), and processing integrity to minimize artifacts. All of these technologies are shared amongst music and geophysical applications.'

At the time, no other pitch correction software existed. To inventors, it was considered the “holy grail”: many had tried, and none had succeeded.

The major roadblock was that analyzing and correcting pitch in real time required processing a very large amount of sound wave data. Others who’d made an attempt at creating software had used a technique called feature extraction, where they’d identify a few key “variables” in the sound waves, then correlate them with the pitch. But this method was overly simplistic, and didn’t consider the finer minutiae of the human voice. For instance, it didn’t recognize diphthongs (when the human voice transitions from one vowel to another in a continuous glide), and, as a result, created false artifacts in the sound.

Hildebrand had a different idea.

As an oil engineer, when dealing with massive datasets, he’d employed autocorrelation (a signal processing technique that compares a signal with delayed copies of itself) to examine not just key variables, but all of the data, to get much more reliable estimates. He realized that it could also be applied to music:

“When you’re processing pitch, you add wave cycles to go sharp, and subtract them when you go flat. With autocorrelation, you have a clearly identifiable event that tells you what the period of repetition for repeated peak values is. It’s never fooled by the changing waveform. It’s very elegant.”
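The idea in the quote above can be sketched in a few lines. This is a textbook autocorrelation pitch estimator, not Hildebrand's implementation: it multiplies the signal by delayed copies of itself and takes the delay (lag) with the strongest match as the period. The function name, the 8,000 Hz sample rate, and the 200 Hz test tone are all assumptions made for illustration; the 70 to 1,000 Hz search range is borrowed from the figures quoted later in the article.

```python
import math

def estimate_pitch_hz(samples, sample_rate, fmin=70.0, fmax=1000.0):
    """Return the frequency whose period (lag) gives the largest autocorrelation."""
    min_lag = int(sample_rate / fmax)  # shortest period we search for
    max_lag = int(sample_rate / fmin)  # longest period we search for
    n = len(samples)
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Multiply the signal by a copy of itself delayed by `lag` and sum.
        # The sum peaks when the delay equals one full period of the waveform.
        score = sum(samples[i] * samples[i + lag] for i in range(n - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag

# Illustrative input: a pure 200 Hz tone sampled at 8,000 Hz.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 200.0 * t / sample_rate) for t in range(2000)]
pitch = estimate_pitch_hz(tone, sample_rate)
print(round(pitch, 1))
```

Because the sum runs over fewer samples at longer lags, multiples of the true period score slightly lower than the period itself, which keeps this simple version from reporting a note an octave too low on clean input. Real voices are messier, and a production tool would need more safeguards than this sketch has.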

While elegant, Hildebrand’s solution required an incredibly complex, almost savant application of signal processing and statistics. When we asked him to provide a simple explanation of what happens, computationally, when a voice signal enters his software, he opened his desk and pulled out thick stacks of folders, each stuffed with hundreds of pages of mathematical equations.

“In my mind it’s not very complex,” he says, sheepishly, “but I haven’t yet found anyone I can explain it to who understands it. I usually just say, ‘It’s magic.’”

The equations that do autocorrelation are computationally expensive: for every one point of autocorrelation (each line on the chart above, right), it might’ve been necessary for Hildebrand to do something like 500 summations of multiply-adds. Previously, other engineers in the music industry had thought it was impossible to use this method for pitch correction: “You needed as many points in autocorrelation as the range in pitch you were processing,” one early-1990s programmer told us. “If you wanted to go from a low E (70 hertz) all the way up to a soprano’s high C (1,000 hertz), you would’ve needed a supercomputer to do that.”
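To get a rough sense of the programmer's objection, the figures quoted above can be multiplied out. The pitch range and the 500 multiply-adds per point come from the article; the 44,100 Hz sample rate and the premise that every point is recomputed from scratch for each incoming sample are assumptions, so treat the result as an order-of-magnitude estimate only.

```python
sample_rate = 44_100         # assumption: CD-quality audio
low_hz, high_hz = 70, 1000   # pitch range quoted in the article
terms_per_point = 500        # multiply-adds per autocorrelation point (article's figure)

longest_lag = sample_rate // low_hz    # period of the lowest note, in samples
shortest_lag = sample_rate // high_hz  # period of the highest note, in samples
points = longest_lag - shortest_lag + 1

per_sample = points * terms_per_point  # work for one incoming sample
per_second = per_sample * sample_rate  # work for one second of audio

print(points, per_sample, per_second)
```

Under these assumptions the brute-force approach needs roughly 13 billion multiply-adds per second of audio, which helps explain why it looked out of reach for mid-1990s studio hardware.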

A supercomputer, or, as it turns out, Andy Hildebrand’s math skills.

Hildebrand realized he was limited by the technology, and instead of giving up, he found a way to work within it using math. “I realized that most of the arithmetic was redundant, and could be simplified,” he says. “My simplification changed a million multiply adds into just four. It was a trick — a mathematical trick.”
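The article does not spell out the trick, so the following is only an illustration of the general kind of redundancy Hildebrand describes, not a reconstruction of his method. When a windowed sum of products is recomputed for every new sample, almost all of its terms are the same as last time. A sliding update adds the newest product and drops the oldest one. All names and test values here are hypothetical.

```python
def windowed_product_sum(x, i, lag, window):
    """Brute force: sum x[j] * x[j - lag] over the `window` samples ending at index i."""
    return sum(x[j] * x[j - lag] for j in range(i - window + 1, i + 1))

def slide(prev_sum, x, i, lag, window):
    """Move the window forward one sample, from ending at i - 1 to ending at i."""
    newest = x[i] * x[i - lag]                    # term entering the window
    oldest = x[i - window] * x[i - window - lag]  # term leaving the window
    return prev_sum + newest - oldest

# Integer test signal so the two methods can be compared exactly.
signal = [(7 * k * k + 3 * k) % 11 - 5 for k in range(200)]
lag, window = 20, 50

running = windowed_product_sum(signal, 100, lag, window)
for i in range(101, 151):
    running = slide(running, signal, i, lag, window)

print(running == windowed_product_sum(signal, 150, lag, window))
```

In this sketch each update costs two multiplications regardless of the window length, whereas the brute-force version costs one multiplication per sample in the window.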

With that, Auto-Tune was born.

Auto-Tune’s Underground Beginnings

Hildebrand built the Auto-Tune program over the course of a few months in early 1996, on a specially equipped Macintosh computer. He took the software to the National Association of Music Merchants conference, the same place where his friend’s wife had suggested the idea a year earlier. This time, it was received a bit differently.

“People were literally grabbing it out of my hands,” recalls Hildebrand. “It was instantly a massive hit.”

At the time, recording pitch-perfect vocal tracks was incredibly time-consuming for both music producers and artists. The standard practice was to do dozens, if not hundreds, of takes in a studio, then spend a few days splicing together the best bits from each take to create a uniformly in-tune track. When Auto-Tune was released, says Hildebrand, the product practically sold itself.

With the help of a small sales team, Hildebrand sold Auto-Tune (which also came in hardware form, as a rack effect) to every major studio in Los Angeles. The studios that adopted Auto-Tune thrived: they were able to get work done more quickly (doing just one vocal take, through the program, as opposed to dozens) — and as a result, took in more clients and lowered costs. Soon, studios had to integrate Auto-Tune just to compete and survive.

Images from Auto-Tune's patent

Once again, Hildebrand dethroned the traditional industry.

“One of my producer friends had been paid $60,000 to manually pitch-correct Cher’s songs,” he says. “He took her vocals, one phrase at a time, transferred them onto a synth as samples, then played it back to get her pitch right. I put him out of business overnight.”

For the first three years of its existence, Auto-Tune remained an “underground secret” of the recording industry. It was used subtly and unobtrusively to correct notes that were just slightly off-key, and producers were wary of revealing its use to the public. Hildebrand explains why:

“Studios weren’t going out and advertising, ‘Hey we got Auto-Tune!’ Back then, the public was wary of the idea of ‘fake’ or ‘affected’ music. They were critical of artists like Milli Vanilli [a pop group whose 1990 Grammy Award was rescinded after it was found out they’d lip-synced over someone else’s songs]. What they don’t understand is that the method used before, doing hundreds of takes and splicing them together, was its own form of artificial pitch correction.”

This secrecy, however, was short-lived: Auto-Tune was about to have its coming out party.

The “Coming Out” of Auto-Tune

When Cher’s “Believe” hit shelves on October 22, 1998, music changed forever.

The album’s titular track -- a pulsating, Euro-disco ballad with a soaring chorus -- featured a curiously roboticized vocal line, where it seemed as if Cher’s voice were shifting pitch instantaneously. Critics and listeners weren’t sure exactly what they were hearing. Unbeknownst to them, this was the start of something much bigger: for the first time, Auto-Tune had crept from the shadows.

In the process of designing Auto-Tune, Hildebrand had included a “dial” that controlled the speed at which pitch corrected itself. He explains:

“When a song is slower, like a ballad, the notes are long, and the pitch needs to shift slowly. For faster songs, the notes are short, the pitch needs to be changed quickly. I built in a dial where you could adjust the speed from 1 (fastest) to 10 (slowest). Just for kicks, I put a “zero” setting, which changed the pitch the exact moment it received the signal. And what that created was the ‘Auto-Tune’ effect.”
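A toy model can make the dial concrete. The sketch below snaps a detected pitch to the nearest equal-tempered semitone and uses a speed setting to decide how quickly the output moves there: zero jumps immediately, while larger values glide. The mapping from the dial to the glide rate is invented for illustration, as are the function names and the 430 Hz test note; the article does not say how Auto-Tune implements the control.

```python
import math

A4_HZ = 440.0  # standard reference pitch for equal temperament

def nearest_semitone_hz(freq_hz):
    """Frequency of the equal-tempered note closest to freq_hz."""
    semitones_from_a4 = round(12 * math.log2(freq_hz / A4_HZ))
    return A4_HZ * 2 ** (semitones_from_a4 / 12)

def retune(detected_hz, speed):
    """Pull each detected pitch toward its target note.

    speed 0 snaps instantly; larger values close the gap more slowly.
    The 1 / (speed + 1) step size is a hypothetical mapping, not Auto-Tune's.
    """
    output = []
    current = detected_hz[0]
    for freq in detected_hz:
        target = nearest_semitone_hz(freq)
        if speed == 0:
            current = target
        else:
            current += (target - current) / (speed + 1)
        output.append(current)
    return output

# A singer holding a slightly flat A (430 Hz instead of 440 Hz) for five frames.
flat_note = [430.0] * 5
snapped = retune(flat_note, 0)   # the "zero" setting
glided = retune(flat_note, 10)   # the slowest setting on the dial
print(snapped)
print([round(f, 1) for f in glided])
```

With the zero setting every frame lands exactly on 440 Hz, so the natural slide between notes disappears. With the slow setting the pitch drifts toward 440 Hz gradually, which is closer to how a singer would correct a note by ear.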

Before Cher, artists had used Auto-Tune only supplementally, to make minor corrections; the natural qualities of their voice were retained. But on the song “Believe”, Cher’s producers, Mark Taylor and Brian Rawling, made a decision to use Auto-Tune on the “zero” setting, intentionally modifying the singer’s voice to sound robotic.

Cher’s single sold 11 million copies worldwide, earned her a Grammy Award, and topped the charts in 23 countries. In the wake of this success, Hildebrand and his company, Antares Audio Technologies, marketed Auto-Tune as the “Cher Effect”. Many people in the music industry attributed the artist’s success to her use of Auto-Tune; soon everyone wanted to replicate it.

“Other singers and producers started looking at it, and saying ‘Hmm, we can do something like that and make some money too!’” says Hildebrand. “People were using it in all genres: pop, country, western, reggae, Bollywood. It was even used in an Islamic call to prayer.”

The secret of Auto-Tune was out — and its saga had just begun.

The T-Pain Debacle

In 2004, an unknown rapper with dreads and a penchant for top hats arrived on the Florida hip-hop scene. His name was Faheem Rashad Najm; he preferred “T-Pain.”

After recording a few “hot flows,” T-Pain was picked out of relative obscurity and signed to Akon’s record label, Konvict Muzik. Once discovered, he decided he’d rather sing than rap. He had a great singing voice, but in order to stand out, he needed a gimmick -- and somewhat fortuitously, he found just that. In a 2014 interview, he explains:

“I used to watch TV a lot [and] there was always this commercial on the channel I would watch. It was one of those collaborative CDs, like a ‘Various Artists’ CD, and there was this Jennifer Lopez song, ‘If You Had My Love.’ That was the first time I heard Auto-Tune. Ever since I heard that song — and I kept hearing and kept hearing it — on this commercial, I was like, ‘Man, I gotta find this thing.’”

T-Pain, who is capable of singing very well naturally, decided to use Auto-Tune to differentiate himself from other artists. “If I was going to sing, I didn’t want to sound like everybody else,” he later told The Seattle Times. “I wanted something to make me different [and] Auto-Tune was the one.” He contacted some “hacker” friends, found a free copy of Auto-Tune floating around on the Internet, and downloaded it. Then, he says, “I just got right into it.”

An old Auto-Tune pamphlet; courtesy of Andy Hildebrand

Between 2005 and 2009, T-Pain became famous for his “signature” use of Auto-Tune, releasing three platinum records. He also earned a title as one of hip-hop’s most in-demand cameo artists. During that time, he appeared on some 50 chart-toppers, working with high-profile artists like Kanye West, Flo Rida, and Chris Brown. During one week in 2007, he was featured on four different Top 10 Billboard Hot 100 singles simultaneously. “Any time somebody wanted Auto-Tune, they called T-Pain,” T-Pain later told NPR.

His warbled, robotic application of Auto-Tune earned him a name. It also earned him a partnership with Hildebrand’s company, Antares Audio Technologies. For several years, the duo enjoyed a mutually beneficial relationship. In one instance, Hildebrand licensed his technology to T-Pain to create a mobile app with app development start-up Smule. Priced at $3, the app, “I Am T-Pain”, was downloaded 2 million times, earning all parties involved a few million dollars.

In the face of this success, T-Pain began to feel he was being used as “an advertising tool.”

'Music isn't going to last forever,' he told Fast Company in 2011, 'so you start thinking of other things to do. You broaden everything out, and you make sure your brand can stay what it is without having to depend on music. It's making sure I have longevity.'

So, T-Pain did something unprecedented: He founded an LLC, then trademarked his own name. He split from Antares, joined with competing audio company iZotope, and created his own pitch correction brand, “The T-Pain Effect”. He released a slew of products bearing his name — everything from a “T-Pain Engine” (a software program that mimicked Auto-Tune) to a toy microphone that shouted, “Hey, this ya boy T-Pain!”

Then, he sued Auto-Tune.

T-Pain vs. Auto-Tune: the filed complaint

The lawsuit, filed on June 25, 2011, alleged that Antares (maker of Auto-Tune) had engaged in “unauthorized use of T-Pain’s name” on advertising material. Though the suit didn’t state an exact amount of damages sought, it did stipulate that the amount was “in excess of $1,000,000.”

Antares and Hildebrand instantly counter-sued. Eventually, the two parties settled the matter out of court and signed a mutual non-disclosure agreement. 'If you can't buy candy from the candy store, you have to learn to make candy,' T-Pain later told a reporter. “It’s an all-out war.”

Of course, T-Pain did not succeed in his grand plan to put Auto-Tune out of business.

“We studied our data to see if he really affected us or not,” Hildebrand tells us. “Our sales neither went up or down due to his involvement. He was remarkably ineffectual.”

For Auto-Tune, T-Pain was ultimately a non-factor. More pressing, says Hildebrand, was Apple, which acquired a competing product in the early 2000s:

“We forgot to protect our patent in Germany, and a German company, [Emagic], used our technology to create a similar program. Then Apple bought [Emagic], and integrated it into their Logic Pro software. We can’t sue them, it would put us out of business. They’re too big to sue.”

But according to Hildebrand, none of this matters much: Antares’ Auto-Tune still owns roughly 90% of the pitch correction market share, and everyone else is “down in the ditch”, fighting for the other 10%. Though Auto-Tune is a brand, it has entered the rarefied stratum of products (Photoshop, Kleenex, Google) whose names have become catch-all terms. Its ubiquitous presence in headlines (for better or worse) has earned it a spot as one of Ad Age’s “hottest brands in America.”

Yet, as popular as Auto-Tune is with its user base, it seems to be universally detested by society, largely as a result of T-Pain and imitators over-saturating modern music with the effect.

Haters Gonna Hate

A few years ago, in a meeting, famed guitar-maker Paul Reed Smith turned toward Hildebrand and shook his head. “You know,” he said, disapprovingly, “you’ve completely destroyed Western music.”

He was not alone in this sentiment: as Auto-Tune became increasingly apparent in mainstream music, critics began to take a stand against it.

In 2009, alternative rock band Death Cab For Cutie launched an anti-Auto-Tune campaign. “We’re here to raise awareness about Auto-Tune abuse,” frontman Ben Gibbard announced on MTV. “It’s a digital manipulation, and we feel enough is enough.” This was shortly followed by Jay-Z’s “D.O.A. (Death of Auto-Tune)”, a Grammy-winning song that dissed the technology and called for an industry-wide ban. Average music listeners are no less vocal: a scan of the comments section on any Auto-Tuned YouTube video reveals (in proper YouTube form) dozens of virulent, hateful opinions on the technology.

Hildebrand at his Scotts Valley, California office

In his defense, Hildebrand harkens back to the history of recorded sound. “If you’re going to complain about Auto-Tune, complain about speakers too,” he says. “And synthesizers. And recording studios. Recording the human voice, in any capacity, is unnatural.”

What he really means to say is that the backlash doesn’t bother him much. For his years of work on Auto-Tune, Hildebrand has earned himself enough to retire happy — and with his patent expiring in two years, that day may soon come.

“I’m certainly not broke,” he admits. “But in the oil industry, there are billions of dollars floating around; in the music industry, this is it.”

He gestures toward the contents of his office: a desk scattered with equations, a few awkwardly-placed awards, a small bookcase brimming with Auto-Tune pamphlets and signal processing textbooks. It’s a small, narrow space, lit by fluorescent ceiling bulbs and a pair of windows that overlook a parking lot. On a table sits a model ship, its sails perfectly calibrated.

“Sometimes, I’ll tell people, ‘I just built a car, I didn’t drive it down the wrong side of the freeway,'” he says, with a smile. “But haters will hate.”

A version of this article previously appeared on December 14, 2015.

Prior to the digital age, life in the studio was all about moderating the effects of human touch.

Compressors evened out the dynamics of the bass player while a side chain feed kept them matched with the drummer. The drummer had a metronome feed playing to maintain tempo.

Singers, well, you could keep their dynamics in control, but when they sang flat, about all you could do was tell them to smile as they sang and aim above the problem notes.

Smiling has the mysterious effect of raising singers' pitch. Aiming high is probably wishful thinking on everyone's part, but sometimes it works.

The Advent of Auto-Tune

You wouldn't think earthquakes have a lot to do with singing in pitch, and they don't, really.

However, it was seismic research that provided the background for Dr. Andy Hildebrand, the creator of Auto-Tune and its parent company, Antares.

He left that field and returned to his early love of music, taking the knowledge he had used to create seismic interpretation workstations and applying it to issues arising in the early days of digital music.

Hildebrand's expertise with digital signal processing led to a series of audio plug-ins, including 1997's Auto-Tune, which could correct the pitch of a voice or any single-note instrument with surprisingly natural results.

Audio engineers now had a weapon against the occasional bum note. Rather than scrapping an entire take, Auto-Tune offered a repair tool that quickly caught on.

Auto-Tune as an Effect

Only a year later, in 1998, Auto-Tune was first used as an effect rather than as a repair tool.

Called the 'Cher Effect' after the singer's hit, 'Believe,' artificial and abrupt pitch changes came into vogue. Later, real-time pitch correction hardware brought both effects and repairs to the stage.

In the studio, Auto-Tune proved another weapon to 'fix it in the mix.'

Issues with Auto-Tune started soon after, with lines drawn between the purist and user camps. Many felt that using pitch correction was an artistic cheat, a way to bypass craft.

The arguments resemble the resistance synthesizers received in the 1970s and '80s that led Queen to note that none were used on their albums.

The other side of the argument pointed out that tools such as compressors and limiters and effects such as audio exciters had already been modifying the sound and behavior of voices throughout the history of recording. Though the anti-Auto-Tune camp seems vocal and large, rarely does a session go by without some use of pitch correction. It's nearly impossible to detect when used judiciously, nowhere near as obvious as when used for effect.

Auto-Tune is no longer the only player in the pitch correction game either. Celemony's Melodyne software substantially improves on Auto-Tune's interface, and it brought the full power of pitch correction to a plug-in before the tool's originator did. Auto-Tune still leads the pack when it comes to response and set-and-forget capability.

'Generic' Auto-Tune

The Antares version of the effect has achieved 'Kleenex' status. Its brand name is now synonymous with the generic effect it originated. It joins 'Pro Tools' from the audio world and 'Photoshop' from digital imaging in this manner.

Unlike some digital music signal processors, pitch correction hasn't generated a huge number of knock-offs. Melodyne is a serious contender, due to its far more intuitive interface. GSnap is an open source alternative that produces similar results. While iZotope's VocalSynth includes pitch correction features, it's more a full vocal processor than a dedicated pitch correction app.

The 4 Best Auto-Tune VST Plugins

Now, let's get into the top 4 Auto-Tune plugins. Each one offers unique features, and I assure you that one of these plugins has exactly what you are looking for.

Antares Auto-Tune Vocal Studio

The originator is now a full-featured and functional vocal processor that still masters the innovative pitch correction duties it brought to the market, but adds a wide range of additional features and effects to help nail down the perfect vocal take.

Auto-Tune 7 forms the core of the Vocal Studio package, still tackling the pitch and time correction duties it always has. Since its earliest days, automatic and graphical modes have handled the various chores for the main Auto-Tune module.

While still presenting a learning curve for the new user, the Auto-Tune 7 interface remains familiar enough for experienced users. Since it's the best-selling pitch correction software going -- and by a huge margin -- there are a lot of existing Auto-Tune users. Even if you're new to the plug-in, chances are you know someone who's used it.

The rest of the Vocal Studio package focuses on vocal manipulations such as automatic doubling, harmony generation, tube amp warmth and vocal timbre adjustment. The range and nature of these adjustments takes vocal processing into some new territory.

The MUTATOR Voice Designer lets you manipulate voices from subtle to extreme, permitting organic or alien manipulations but with results that still sound like voices, though perhaps not of this world. The ARTICULATOR Talk Box produces effects such as the guitar talk box of Peter Frampton and Joe Walsh, but also Alan Parsons-ish vocoder sounds, combining the features of sung or spoken voice with an instrument's output.

While Auto-Tune Vocal Studio remains pricey, it still sits at the top of a niche market in audio processing.

Melodyne 4 Studio

If Auto-Tune has a serious competitor in the pitch correction universe, it's Celemony's Melodyne. The interface, layout and operation of Melodyne are inherently more musical than the Antares take, so newcomers to pitch correction will likely find Melodyne easier to work with.

The Melodyne 'blob' is an easy to grasp analog of a sung note. It's far more intuitive than a waveform to understand. With the focus on graphical interface, Melodyne makes sense more quickly and easily than Auto-Tune. The latter's switching between automatic and graphical modes creates a comparative disconnect between functions.

Even long-time users of Auto-Tune will find moving to Melodyne natural, as there's enough in common that, once a user gets their bearings, familiar functions remain available.

Many Melodyne functions work on polyphonic material too, so correcting a track with a multi-voice choir or a chording instrument is possible. It's not a perfect function, but it's uncanny how often Melodyne senses chords clearly enough to allow changing of a single element.

What Melodyne doesn't do is the advanced vocal pyrotechnics offered by Auto-Tune. The Celemony product is all about pitch and time correction, and it accomplishes these with grace and ease.

Those looking for an affordable entry into digital pitch correction can turn to Melodyne 4 Essential. It's a plug-in that handles the pitch and time corrections of its big brother, but with fewer advanced features and without the full-featured price tag.

iZotope VocalSynth

Though pitch correction isn't the focus of this iZotope plug-in, it resembles the full Auto-Tune Studio package. At a fraction of the cost of the big boys in this class, VocalSynth doesn't offer the depth of control experienced with either Auto-Tune or Melodyne, yet it still manages to provide a reasonable job of pitch correction.

There's no graphical representation such as Melodyne's or Auto-Tune's graphical mode. That makes fine-tuning performances a little beyond the reach of VocalSynth, but for reasonable performances, it's not a major limitation. Think of the iZotope product as a first-aid kit rather than an emergency department.

The four voice synthesis modules are where the fun resides with VocalSynth. Talkbox, Compuvox, Polyvox and Vocoder modules emulate many of the vocal effects you've heard on hits from a wide range of artists. This is also just the most overt extra in the VocalSynth package.

A variety of additional modules let you tune up or tear up your vocal tracks. Add harmony, filter vocals, create radio and phone effects. These modules can either optimize your track or take it to new and exciting places.

VocalSynth may be the country cousin to the serious pitch manipulators, but it has capability with a high fun factor.

GVST GSnap

Don't let the download page fool you: GSnap is a VST plug-in that works with any DAW platform that supports VST, not simply Windows-based DAWs. Both 32- and 64-bit versions are included. It is completely free, but it does come with limits. While it shows more graphic information than iZotope offers, it doesn't allow direct edits.

While not as flexible as pro pitch correction, it's a low-cost alternative for users who can't swing the big time prices. It's difficult to use GSnap subtly. That's not an issue for those seeking pitch correction effects, such as Cher or T-Pain. Backup vocals are also a good candidate.

This is the entry level of pitch correction, and because of that, it's included here. The effect is so ubiquitous that anyone working in the field needs to know how it works. GSnap represents the place to start.

Wrapping It Up

Love it or hate it, pitch correction is here to stay, both as tool and effect. These four plug-ins aren't the only ones out there, but they represent the spectrum of pitch correction treatment. Auto-Tune is the originator. Melodyne is the refinement. It works just as well as the Antares product in nearly every way, with an interface that is easy to grasp.

iZotope VocalSynth represents the cream of the mid-priced plug-ins. It's capable and creative, even if it's not as flexible on pitch correction as the top-line apps. GSnap represents pitch correction for everyman. You can't knock the price of freeware.

The debate will likely rage over the ethics of pitch correction in popular music. While you wait for the dust to settle, give one of these packages a try.
